Banking API calls are projected to rise from 137 billion in 2025 to 722 billion by 2029, a 427% increase, according to Juniper Research's open banking forecast. That changes the developer conversation.

Banking API integration used to be a specialist task for a small subset of fintech teams. It isn't anymore. If you build B2B software that touches payments, treasury data, reconciliation, lending workflows, onboarding, or embedded finance, you're already dealing with the same core problem: too many systems, too many edge cases, and too much maintenance if you integrate each source one by one.

The part many teams underestimate is that banking fragmentation looks a lot like eCommerce fragmentation. Different platforms expose similar business objects through different schemas, auth flows, event models, and operational limits. The teams that move fastest don't “code harder.” They abstract complexity early.

The New Frontier of Banking API Integration

Open banking moved banking data access from private, tightly controlled integrations toward consent-based connectivity. In practical terms, that means your application can access account information or initiate payments when the user grants permission and the regulatory model allows it.

For developers, the terms matter:

- AISP usually means your app reads account and transaction data.

- PISP usually means your app initiates a payment on the user's behalf.

- Consent flow is the sequence that proves the user approved the access your app is requesting.

Those definitions are simple. The implementation isn't.

Fragmentation is the real problem

The hardest part of banking API integration usually isn't making one API call. It's building a system that survives differences across banks, versions, field names, auth requirements, and uptime behavior. One institution may return pending and booked transactions in separate structures. Another may combine them. One may issue refresh tokens with a long lifecycle. Another may force frequent re-consent.

That's why a unified API strategy matters. Instead of coupling your product logic directly to each bank's quirks, you place a stable abstraction layer between your app and the institutions you connect to.

This is the same architectural lesson many B2B eCommerce teams already know. A vendor integrating with dozens of carts and marketplaces doesn't want product sync, order import, and inventory logic rewritten for every platform. The same thinking applies in finance. A single integration layer can protect your core product from schema drift and operational inconsistency. API2Cart makes that point from the commerce side in its open banking API overview.

Practical rule: Treat bank connectivity as infrastructure, not as the place where your product should express business uniqueness.

What changes for B2B SaaS teams

If you build an ERP connector, reconciliation tool, expense platform, cash flow dashboard, or commerce-adjacent finance product, you're now operating at a time when API volume is climbing fast and customer expectations are climbing with it.

That creates three immediate engineering consequences:

- You need a bank-agnostic domain model. If your internal transaction object mirrors one provider too closely, migration and expansion get painful.

- You need consent-aware workflows. Account linking and payment authorization aren't just technical events. They affect UX, retries, and support.

- You need operational visibility. In banking, a timeout is never “just a timeout.” It may block money movement, delay downstream bookkeeping, or create duplicate user actions.

The teams that succeed usually accept one truth early: direct integrations can work, but fragmentation will tax every future release.

Designing Your Integration Architecture

Roughly 30% of integration failures come from mismatched bank data formats, according to Group107's banking integration guidance. That is not a parsing nuisance. It is an architecture problem.

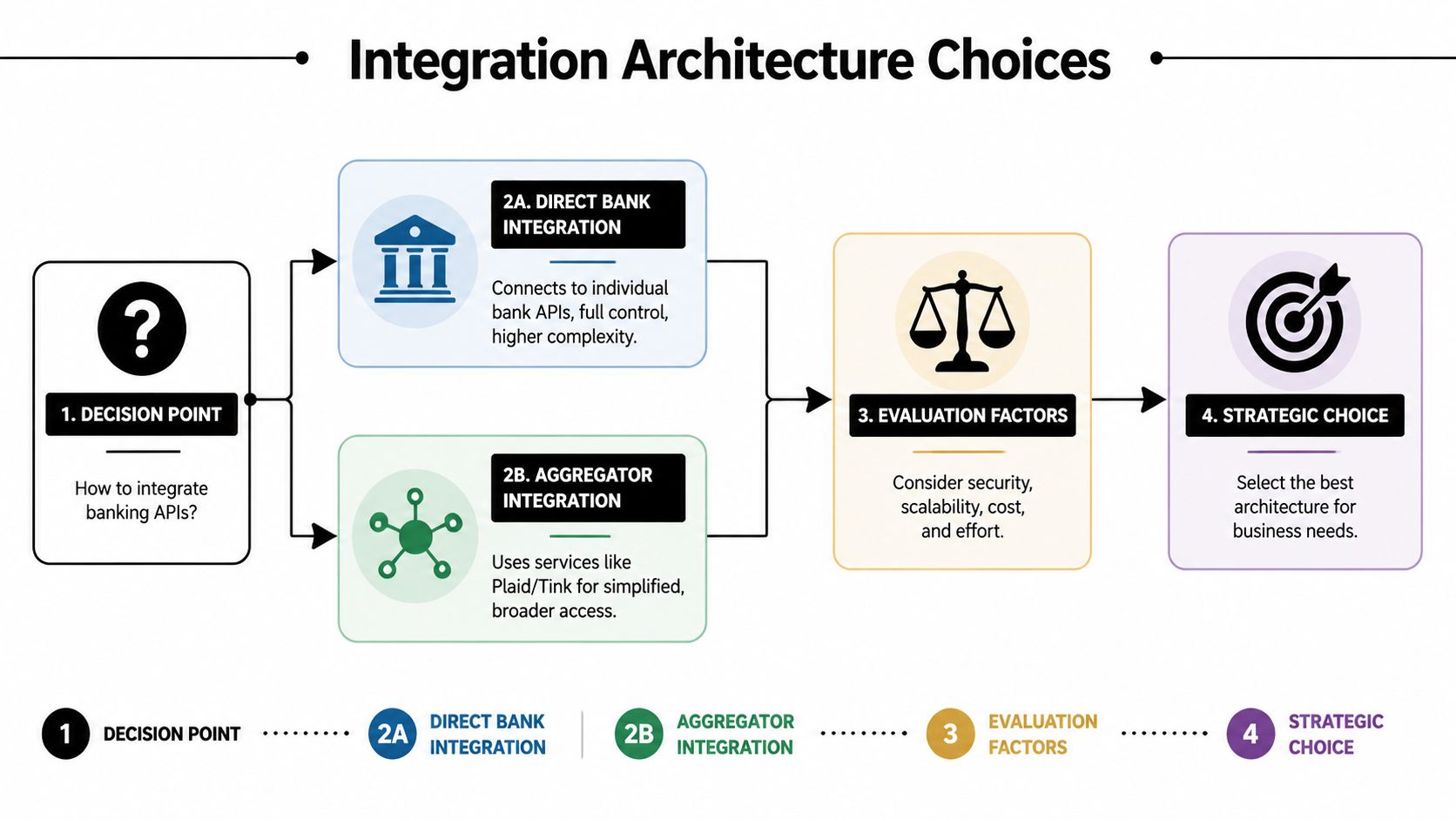

Teams usually face one early decision. Connect directly to each bank, or put a unifying layer between your product and the institutions. I have seen both succeed. I have also seen direct integrations look cheaper for the first two banks, then become the most expensive part of the roadmap by bank five.

Direct integration versus abstraction

The trade-off is straightforward:

| Model | What you gain | What you pay for |
|---|---|---|
| Direct bank integration | Fine-grained control over flows, payload handling, and release timing | Ongoing maintenance, duplicated normalization logic, institution-specific auth behavior |
| Unified or aggregator layer | Broader coverage, a more consistent API surface, faster delivery for new institutions | Less exposure to provider-specific features and edge-case behavior |

The eCommerce parallel clarifies the stakes here. A team building against many shopping carts learns quickly that rewriting catalog sync, order import, and inventory updates for every platform wastes engineering time. Banking integration has the same fragmentation pattern. Account data, consent flows, refresh logic, and error models vary just enough to create permanent drag. A unified API strategy contains that variability instead of letting it spread through product code.

OAuth with PKCE belongs near the core

Consent and authentication design should sit near the center of the architecture, not as an adapter detail. Group107 notes that effective implementations prioritize OAuth 2.0 with PKCE for consent flows and can exceed a 95% success rate on happy-path flows.

PKCE matters because public clients and browser-based flows are exposed to authorization-code interception risks. The implementation pattern is usually simple on paper:

- The app starts a consent request.

- The user is redirected to the bank authorization page.

- The user authenticates and approves access.

- The bank returns an authorization code.

- The backend exchanges that code for tokens and stores them securely.

- Background services use the granted scopes for allowed reads or payment actions.

The hard part starts after the first successful login.

Refresh token rotation, consent expiry, partial scopes, and institution-specific timeout behavior all affect support load and user trust. If those concerns live in each connector, every bank adds another place for auth bugs to hide. If they live behind a stable integration boundary, product teams can work with one consent state machine and one retry model.
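One way to keep "one consent state machine" honest is an explicit transition table, so illegal jumps fail loudly instead of drifting silently. The state names and allowed transitions below are illustrative assumptions. They are not taken from any bank's or standard's specification.

```python
from enum import Enum


class ConsentState(Enum):
    PENDING = "pending"                  # user redirected, no tokens yet
    ACTIVE = "active"                    # tokens valid, scopes granted
    REFRESHING = "refreshing"            # access token being renewed
    REAUTH_REQUIRED = "reauth_required"  # refresh failed or consent expired
    REVOKED = "revoked"                  # user or bank withdrew consent


# Allowed transitions; anything else is a bug to surface, not to ignore.
TRANSITIONS = {
    ConsentState.PENDING: {ConsentState.ACTIVE, ConsentState.REVOKED},
    ConsentState.ACTIVE: {ConsentState.REFRESHING, ConsentState.REAUTH_REQUIRED, ConsentState.REVOKED},
    ConsentState.REFRESHING: {ConsentState.ACTIVE, ConsentState.REAUTH_REQUIRED, ConsentState.REVOKED},
    ConsentState.REAUTH_REQUIRED: {ConsentState.PENDING, ConsentState.REVOKED},
    ConsentState.REVOKED: set(),
}


class InvalidTransition(Exception):
    pass


class Consent:
    def __init__(self, consent_id: str) -> None:
        self.consent_id = consent_id
        self.state = ConsentState.PENDING
        self.history = [ConsentState.PENDING]  # useful for support and audits

    def move_to(self, new_state: ConsentState) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise InvalidTransition(f"{self.state.value} -> {new_state.value}")
        self.state = new_state
        self.history.append(new_state)
```

Every connector reports into the same states. Product code can then decide what to show the user, for example a re-consent prompt on `REAUTH_REQUIRED`, without knowing which bank is involved.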

Normalize once, close to ingestion

A banking integration matures when the internal model stops mirroring any single bank.

Normalize as data enters the platform, version the canonical schema, and keep bank-specific quirks inside the connector and mapping layers. If one institution uses booking date and another uses value date, resolve that distinction before reconciliation logic sees the record. If account subtype labels differ, map them once. If optional fields are missing, make that absence explicit in the domain model instead of forcing every feature team to rediscover it.
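Here is a minimal sketch of normalizing at ingestion. Both bank payload shapes and all of their field names are hypothetical. The sketch also assumes a two-decimal currency when converting minor units. The point is that the booking date versus value date distinction, and a missing field, are resolved once in the mapper.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal
from typing import Optional


@dataclass(frozen=True)
class CanonicalTransaction:
    schema_version: int
    source: str
    external_id: str
    amount: Decimal               # signed: negative means money out
    currency: str
    status: str                   # "pending" or "booked"
    booking_date: Optional[date]  # None when the bank does not report it
    value_date: Optional[date]    # None when the bank does not report it


def _parse_date(value: Optional[str]) -> Optional[date]:
    return date.fromisoformat(value) if value else None


def from_bank_a(raw: dict) -> CanonicalTransaction:
    # Hypothetical Bank A: unsigned amount plus a direction flag, both dates present
    sign = -1 if raw["direction"] == "debit" else 1
    return CanonicalTransaction(
        schema_version=1,
        source="bank_a",
        external_id=raw["transactionId"],
        amount=sign * Decimal(raw["amount"]),
        currency=raw["currency"],
        status=raw["status"].lower(),
        booking_date=_parse_date(raw.get("bookingDate")),
        value_date=_parse_date(raw.get("valueDate")),
    )


def from_bank_b(raw: dict) -> CanonicalTransaction:
    # Hypothetical Bank B: signed minor units, a pending flag, and only a value date
    return CanonicalTransaction(
        schema_version=1,
        source="bank_b",
        external_id=str(raw["id"]),
        amount=Decimal(raw["amount_minor"]) / 100,  # assumes a two-decimal currency
        currency=raw["ccy"],
        status="pending" if raw["is_pending"] else "booked",
        booking_date=None,  # absence made explicit, not guessed from value_date
        value_date=_parse_date(raw["value_date"]),
    )
```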

A practical four-layer split works well:

- Connector layer for institution-specific auth, transport, and rate-limit handling

- Normalization layer for mapping external payloads into internal schemas

- Domain layer for reconciliation, categorization, cash flow logic, and payment orchestration

- Delivery layer for UI views, exports, webhooks, and downstream consumers

If business logic branches on bank identity, bank-specific details have leaked past the abstraction boundary.
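The four-layer split can be sketched as a few narrow interfaces. This is an illustrative structure, not a prescribed one. `Connector`, `Txn`, the fake banks, and their payload fields are all hypothetical, and the delivery layer is omitted. The domain function receives only canonical records, so it has nothing bank-specific to branch on.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Callable, Dict, Iterable, List, Protocol


@dataclass(frozen=True)
class Txn:
    """Canonical record: the only shape the domain layer ever sees."""
    external_id: str
    amount: Decimal


class Connector(Protocol):
    """Connector layer: auth, transport, rate limits. Returns raw payloads."""
    def fetch_transactions(self) -> List[dict]: ...


# Normalization layer: one mapper per source, registered by source name
Normalizer = Callable[[dict], Txn]


def ingest(connectors: Dict[str, Connector], normalizers: Dict[str, Normalizer]) -> List[Txn]:
    out: List[Txn] = []
    for source, connector in connectors.items():
        mapper = normalizers[source]
        out.extend(mapper(raw) for raw in connector.fetch_transactions())
    return out


def net_cash_flow(txns: Iterable[Txn]) -> Decimal:
    """Domain layer: works on canonical records only, no bank identity."""
    return sum((t.amount for t in txns), Decimal("0"))


class FakeBankA:
    def fetch_transactions(self) -> List[dict]:
        return [{"id": "a1", "amt": "100.00"}, {"id": "a2", "amt": "-40.00"}]


class FakeBankB:
    def fetch_transactions(self) -> List[dict]:
        return [{"ref": 7, "minor": -1500}]
```

Adding a bank means adding one connector and one normalizer entry. `net_cash_flow` and everything above it stay untouched.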

For teams with multi-platform commerce experience, this should feel familiar. The same pattern appears in shopping cart integrations. API2Cart's API proxy server architecture example shows the proxy approach clearly: isolate source-system differences behind a stable contract so feature work does not reopen transport and mapping decisions every sprint.

A practical decision framework

Choose direct integrations if the institution list is small, the product depends on bank-specific capabilities that a unified layer cannot expose, and the team can support connector maintenance for years, not quarters.

Choose a unified layer if coverage, delivery speed, and consistency matter more than bank-specific customization. That is the right call for many first major banking projects, especially for SaaS products that need to onboard new customers quickly without rebuilding consent, sync, and reconciliation logic each time.

Architecture choices also affect operations. A thin abstraction with clear ownership boundaries makes audits, incident review, and failover planning easier. That maps closely to broader requirements around operational resilience for financial institutions, where teams need to prove not only that integrations work, but that they fail in controlled and observable ways.

Security work in fintech isn't a final review step. It shapes the integration from the first diagram onward.

A banking integration fails long before a breach if developers treat compliance as paperwork instead of implementation detail. Audit requirements become code requirements. Access policies become service boundaries. Logging becomes evidence.

What compliance means in code

In multi-API banking projects, 35% of EU projects face PSD2 non-compliance challenges, and SoftTeco's integration analysis points to FAPI 2.0, mutual TLS, and signed requests with JWS as critical controls for auditing calls and avoiding those pitfalls.

That translates into concrete engineering tasks:

- Use strong client authentication. If the environment requires mutual TLS, implement certificate lifecycle handling as a first-class operational process.

- Sign requests where required. Don't bolt message signing on after the transport layer is already in production.

- Audit every sensitive action. You need an immutable trail for consent creation, token exchange, payment initiation, and privileged admin access.

- Encrypt data in transit and at rest. This is basic, but teams still fail by leaving internal service hops underprotected.

- Store secrets outside app code and deployment configs. Secret rotation needs an owner and a process.
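Hash chaining is one common technique for the audit trail. Each entry commits to the hash of the previous entry, so editing or deleting an earlier record breaks verification. The sketch below uses hypothetical action names. It makes tampering detectable but does not by itself make the log immutable. That still needs write-once storage and restricted access.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import List


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only audit trail where each entry commits to the previous one."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, actor: str, action: str, subject_id: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,            # a named person or service, not a shared account
            "action": action,          # e.g. "consent.created", "payment.initiated"
            "subject_id": subject_id,  # identifier only, never the sensitive payload
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or removed entry makes this False."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```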

Strong Customer Authentication affects product design

SCA isn't just a legal phrase. It changes how users move through your app.

When your flow requires step-up authentication, your frontend needs to preserve state across redirects, handle abandoned sessions, and clearly explain why the user is leaving your interface. Your backend needs to match the returning session safely and resume the transaction without ambiguity.

If you don't design this well, support sees the symptoms first:

- Users think payment initiation “hung”

- Repeated clicks trigger duplicate attempts

- Consent expires and nobody understands why the data stopped syncing

That's not only a UX issue. It becomes an operational issue.

Implementation note: Never let compliance-sensitive transitions become invisible background behavior. If the user must re-authenticate or re-consent, your app should state that clearly and recover gracefully.
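The redirect handling that SCA forces on the frontend and backend can be sketched as a small store of pending flows. This is a minimal sketch assuming an in-memory dict and a hypothetical `payment_intent_id`. A production system would use a shared store with expiry. The opaque state token is single-use and short-lived, so a returning redirect either resumes exactly one transaction or fails cleanly.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class PendingAuth:
    payment_intent_id: str
    user_id: str
    created_at: float


class RedirectSessionStore:
    """Tracks flows that left the app for a bank's SCA page."""

    def __init__(self, ttl_seconds: float = 600, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._pending: Dict[str, PendingAuth] = {}

    def start(self, payment_intent_id: str, user_id: str) -> str:
        state = secrets.token_urlsafe(32)
        self._pending[state] = PendingAuth(payment_intent_id, user_id, self._clock())
        return state  # sent with the redirect; never reused

    def resume(self, state: str, user_id: str) -> Optional[str]:
        # pop() consumes the token, so a replayed redirect finds nothing
        pending = self._pending.pop(state, None)
        if pending is None:
            return None  # unknown, replayed, or already consumed
        if self._clock() - pending.created_at > self._ttl:
            return None  # abandoned session: tell the user and restart the flow
        if pending.user_id != user_id:
            return None  # redirect returned into a different user's session
        return pending.payment_intent_id
```

Returning `None` gives the UI one clear branch to explain what happened. It also prevents a repeated click from silently starting a second payment.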

Logging, access control, and operational proof

Good fintech logging is selective and accountable. You need enough detail to reconstruct a flow, but not so much that logs become a liability. Don't log raw secrets, full tokens, or sensitive payloads you can avoid storing. Log identifiers, event types, timestamps, state transitions, and trace references.
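Selective logging is easier to enforce when redaction happens in one helper instead of at every call site. The sketch below assumes a hand-maintained set of sensitive key names. That list is an illustrative starting point, not a complete one. Timestamps come from the logging formatter.

```python
import json
import logging
from typing import Any

# Assumed key names that must never reach logs; extend for your own payloads.
SENSITIVE_KEYS = {"access_token", "refresh_token", "client_secret", "authorization", "iban", "account_number"}


def redact(value: Any) -> Any:
    """Recursively replace sensitive fields with a marker before logging."""
    if isinstance(value, dict):
        return {
            k: "[REDACTED]" if str(k).lower() in SENSITIVE_KEYS else redact(v)
            for k, v in value.items()
        }
    if isinstance(value, list):
        return [redact(v) for v in value]
    return value


def log_event(logger: logging.Logger, event_type: str, trace_id: str, **fields: Any) -> str:
    """Emit one structured line: event type, trace reference, redacted fields."""
    record = {"event": event_type, "trace_id": trace_id, **redact(fields)}
    line = json.dumps(record, sort_keys=True, default=str)
    logger.info(line)
    return line
```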

Use role-based access for internal tooling. Production support doesn't need unrestricted access to customer-linked financial operations. Separate duties where possible. Make admin actions traceable to a person, not a shared account.

For teams tightening governance around availability, incident response, and third-party dependencies, this overview of operational resilience for financial institutions is worth reading because it maps the resilience conversation to the controls auditors increasingly expect.

Don't build insecure convenience layers

A common mistake is creating an internal “shortcut” service that stores broad credentials and exposes loose endpoints to speed up development. It feels efficient at first. It usually becomes the weakest part of the stack.

Instead, keep the contract narrow:

- Request only the minimum scopes

- Segment services by responsibility

- Expire sessions and tokens appropriately

- Require explicit authorization checks in internal APIs
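An explicit, per-endpoint authorization check can be as small as a decorator. The scope names, the `Caller` type, and the two internal functions below are hypothetical. In practice the caller's scopes would come from a verified service token rather than being passed in directly.

```python
import functools
from dataclasses import dataclass, field
from typing import Callable, FrozenSet


@dataclass(frozen=True)
class Caller:
    service: str
    scopes: FrozenSet[str] = field(default_factory=frozenset)


class Forbidden(Exception):
    pass


def requires_scopes(*needed: str) -> Callable:
    """Reject the call unless the caller holds every listed scope."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(caller: Caller, *args, **kwargs):
            missing = set(needed) - caller.scopes
            if missing:
                raise Forbidden(f"{caller.service} lacks {sorted(missing)}")
            return fn(caller, *args, **kwargs)
        return wrapper
    return decorator


@requires_scopes("balances:read")
def get_balance(caller: Caller, account_id: str) -> str:
    return f"balance for {account_id}"


@requires_scopes("payments:initiate")
def initiate_payment(caller: Caller, intent_id: str) -> str:
    return f"submitted {intent_id}"
```

A reporting service granted only `balances:read` can read balances but is refused at the payment endpoint. Read access never quietly implies write access.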

If you need a concise refresher on the mechanics behind auth choices, API2Cart's piece on authentication in API integrations is a useful parallel because the same principle holds across banking and commerce: authentication design determines how safely the rest of the integration can evolve.

Building for Resilience and High Volume

Most integrations look fine in sandbox conditions. Real trouble starts when networks wobble, providers slow down, and users repeat actions because the UI hasn't updated yet.

This is where banking API integration stops being an API exercise and becomes a distributed systems problem.

Build around failure, not around demos

A recent review of banking integration challenges notes a practical gap in performance and scaling guidance. It highlights that 30% of fintechs face latency issues, and that a hybrid polling and webhook strategy outperforms pure real-time models for global reliability in high-volume environments, as discussed in Octal's analysis of banking API integration challenges.

That matches what production systems usually teach the hard way. Pure webhook models are elegant until events are delayed, dropped, or arrive out of order. Pure polling models are predictable until they become slow, expensive, or rate-limited.

The answer is usually hybrid:

- webhooks for fast notification

- polling for reconciliation and gap filling

- idempotent ingestion so duplicates don't break state
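The hybrid approach works when webhooks and polling feed the same idempotent upsert. This sketch assumes the provider exposes a reliable per-record `updated_at`. Where it doesn't, a version number or a status precedence rule would play the same role. Ties on timestamp are treated as duplicates.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, Iterable


@dataclass(frozen=True)
class TxnEvent:
    external_id: str
    status: str
    updated_at: datetime  # provider-side last-modified time (assumed available)


class TransactionStore:
    """One idempotent upsert shared by the webhook handler and the poller."""

    def __init__(self) -> None:
        self.records: Dict[str, TxnEvent] = {}

    def upsert(self, event: TxnEvent) -> bool:
        """Apply the event if it is new or newer; return True if state changed."""
        current = self.records.get(event.external_id)
        if current is not None and current.updated_at >= event.updated_at:
            return False  # duplicate or out-of-order delivery: ignore
        self.records[event.external_id] = event
        return True


def on_webhook(store: TransactionStore, event: TxnEvent) -> bool:
    # Fast path: events may arrive late, repeated, or not at all
    return store.upsert(event)


def reconcile_poll(store: TransactionStore, page: Iterable[TxnEvent]) -> int:
    # Gap-filling path: replay a polled window; returns how many records changed
    return sum(1 for event in page if store.upsert(event))
```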

Idempotency is not optional

If your integration can initiate payments or create downstream records, every write path needs an idempotency strategy. That means a client-generated key or equivalent request identifier, stored and checked before execution.

Without it, a timeout creates a dangerous ambiguity. Did the provider process the request or not? If your system retries blindly, you can create duplicate payment attempts or duplicate accounting entries.

A reliable pattern looks like this:

- Generate a unique request key before the outbound call.

- Persist intent and request metadata.

- Send the request with that key.

- On retry, reuse the same key.

- Reconcile final provider status asynchronously if the immediate response is unclear.

A timeout after submission is not a green light to resend as a new request.
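The five steps above map onto a small service object. This is a sketch under stated assumptions: `send` and `lookup` are hypothetical callables standing in for the provider client. The provider is assumed to deduplicate on the key and to support a status lookup by key. The dict stands in for durable storage.

```python
import uuid
from dataclasses import dataclass
from typing import Callable, Dict, Optional


class ProviderTimeout(Exception):
    """Raised when we cannot tell whether the provider processed the request."""


@dataclass
class PaymentIntent:
    idempotency_key: str
    amount_minor: int
    status: str = "created"  # created -> submitted -> accepted or unknown
    provider_ref: Optional[str] = None


class PaymentService:
    def __init__(self, send: Callable[[str, int], str], lookup: Callable[[str], Optional[str]]):
        self._send = send      # send(key, amount) -> provider_ref; may raise ProviderTimeout
        self._lookup = lookup  # lookup(key) -> provider_ref, or None if not found
        self.intents: Dict[str, PaymentIntent] = {}

    def create_intent(self, amount_minor: int) -> PaymentIntent:
        # Steps 1-2: generate the key before any network call and persist the intent
        intent = PaymentIntent(str(uuid.uuid4()), amount_minor)
        self.intents[intent.idempotency_key] = intent
        return intent

    def submit(self, intent: PaymentIntent) -> PaymentIntent:
        # Steps 3-4: send, or resend, with the SAME key
        intent.status = "submitted"
        try:
            intent.provider_ref = self._send(intent.idempotency_key, intent.amount_minor)
            intent.status = "accepted"
        except ProviderTimeout:
            intent.status = "unknown"  # never create a fresh intent here
        return intent

    def reconcile(self, intent: PaymentIntent) -> PaymentIntent:
        # Step 5: resolve an unclear outcome asynchronously by querying with the key
        ref = self._lookup(intent.idempotency_key)
        if ref is not None:
            intent.provider_ref, intent.status = ref, "accepted"
        return intent
```

The important property is that an `unknown` intent can only move forward through reconciliation or a resend with the original key. Neither path can produce a second payment.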

Error handling that survives production

Happy-path code isn't integration code. You need explicit strategies for transient failures, provider-side issues, malformed payloads, and partial data availability.

Use these patterns together:

- Exponential backoff for temporary failures and rate limiting

- Circuit breakers so one unstable endpoint doesn't drag down your entire sync pipeline

- Dead-letter queues for records that need manual or delayed reprocessing

- Structured retries that distinguish safe-to-retry reads from dangerous write retries

- Observability with traces that connect the user action, the internal service call, and the provider response
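Backoff and the read/write distinction fit naturally into one retry helper. This is a minimal sketch. `TransientError` is a hypothetical wrapper your client would raise for timeouts, 429s, and 5xx responses, carrying the provider's retry hint when there is one. The delay uses the "full jitter" variant of exponential backoff.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Retryable failure; retry_after is the provider's hint in seconds, if any."""

    def __init__(self, message: str = "", retry_after: Optional[float] = None):
        super().__init__(message)
        self.retry_after = retry_after


def backoff_delay(attempt: int, base: float = 0.5, cap: float = 30.0) -> float:
    """Full jitter: uniform in [0, min(cap, base * 2**attempt)]."""
    return random.uniform(0, min(cap, base * (2 ** attempt)))


def call_with_retries(
    op: Callable[[], T],
    *,
    safe_to_retry: bool,
    max_attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    attempt = 0
    while True:
        try:
            return op()
        except TransientError as exc:
            attempt += 1
            # Writes without an idempotency key are never retried blindly
            if not safe_to_retry or attempt >= max_attempts:
                raise
            # Prefer the provider's guidance (e.g. Retry-After) over our own schedule
            delay = exc.retry_after if exc.retry_after is not None else backoff_delay(attempt - 1)
            sleep(delay)
```

Jitter spreads retries from many workers over time, which is what keeps a rate-limit response from turning into a retry storm.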

A practical resilience checklist:

| Failure mode | What to do |
|---|---|
| Rate limit response | Respect provider retry guidance, slow the worker, and prevent retry storms |
| Temporary provider outage | Open the circuit, queue recoverable work, and surface degraded status internally |
| Webhook missed or delayed | Backfill via polling and reconcile by updated timestamps or transaction markers |
| Incomplete payload | Preserve raw response, mark the record for normalization review, avoid crashing the whole batch |
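"Open the circuit" for a temporary provider outage can be illustrated with a minimal, single-threaded circuit breaker. The threshold and cooldown values are placeholders. A production breaker would usually limit half-open probes to one in-flight call and export its state as a metric.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpen(Exception):
    """Raised instead of calling a provider that is known to be failing."""


class CircuitBreaker:
    """Closed -> open after N consecutive failures -> half-open after a cooldown."""

    def __init__(self, failure_threshold: int = 5, cooldown_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self._clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self._clock() - self.opened_at >= self.cooldown_s:
            return "half_open"  # trial calls allowed; a failure reopens the circuit
        return "open"

    def call(self, op: Callable[[], T]) -> T:
        if self.state == "open":
            raise CircuitOpen("provider degraded; queue the work and retry later")
        try:
            result = op()
        except Exception:
            self.failures += 1
            if self.state == "half_open" or self.failures >= self.failure_threshold:
                self.opened_at = self._clock()  # (re)open and restart the cooldown
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result
```

Callers that catch `CircuitOpen` can queue the work and report degraded status without waiting on timeouts. This keeps one unstable endpoint from stalling the whole sync pipeline.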

The eCommerce parallel is useful here

Commerce integrations taught this lesson years ago. Real-time where possible, scheduled reconciliation where necessary, and normalized state in the middle. The same pattern works for banking because the operational problem is similar: many sources, inconsistent event quality, and business logic that can't tolerate silent drift.

That's also where API2Cart can help teams building commerce-adjacent financial products. It gives B2B vendors a unified way to connect with shopping carts and marketplaces through one API, with webhooks where supported and list methods with date filters for polling-based sync. If your product spans both commerce data and banking workflows, that kind of unified layer reduces the number of moving parts your team has to own directly.

Common Pitfalls and Strategic Mitigation

The biggest mistake in banking API integration is thinking the work ends when the first institution goes live. It starts there.

The traps teams keep repeating

A recurring challenge is legacy infrastructure. As summarized in Kitrum's discussion of open banking integration challenges, integrations with core systems can take many months because of poor documentation and internal silos, and middleware adoption is rising because it reduces third-party risk and hides source-system complexity.

That surfaces in a few predictable failure modes:

- Normalization gets deferred. Teams pass provider payloads straight into product code to move faster. Later, every feature carries connector-specific conditionals.

- Token lifecycle is treated as an edge case. Initial consent works in test. Re-authentication and expiry handling are weak in production.

- API changes are discovered by customers. No one owns schema monitoring, changelog review, or canary validation.

- Sandbox confidence is overestimated. Test environments rarely reproduce the timing, data quality, and operational noise of production.

What mitigation actually looks like

The durable fix is abstraction plus discipline.

Create a middleware or integration layer with a narrow contract. Make one team or one clearly owned service responsible for provider adaptation. Add schema validation at ingestion. Test error paths as seriously as success paths. Keep production telemetry close to the connector layer so failures aren't buried inside generic application logs.
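Schema validation at ingestion does not need to fail a whole batch. The sketch below is hand-rolled to stay dependency-free, and its three-field contract is a hypothetical example. Many teams would use JSON Schema or a validation library instead. Invalid records are parked together with their raw payload and a list of problems, which is the "mark for normalization review" behavior described earlier.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple

# Hypothetical minimal contract for an inbound transaction payload
REQUIRED_FIELDS: Dict[str, type] = {"id": str, "amount": str, "currency": str}


def validate(raw: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the payload passes."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in raw:
            problems.append(f"missing {name}")
        elif not isinstance(raw[name], expected):
            problems.append(f"{name} should be {expected.__name__}")
    return problems


@dataclass
class IngestResult:
    accepted: List[Dict[str, Any]] = field(default_factory=list)
    quarantined: List[Tuple[Dict[str, Any], List[str]]] = field(default_factory=list)


def ingest_batch(batch: List[Dict[str, Any]]) -> IngestResult:
    """Validate each record; park bad ones with the raw payload instead of failing the batch."""
    result = IngestResult()
    for raw in batch:
        problems = validate(raw)
        if problems:
            result.quarantined.append((raw, problems))  # raw kept for later review
        else:
            result.accepted.append(raw)
    return result
```

A sudden rise in quarantined records from one connector is also an early signal of an unannounced provider schema change.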

Use a short operational loop:

- detect changes early

- isolate them inside the connector boundary

- keep your domain model stable

- expose only normalized events and records downstream

Integration is a lifecycle. Build for version drift, token churn, and partial failure from the first release.

If your architecture doesn't assume change, the banks will force that lesson later.

Your Blueprint for Successful Integration

The banking API market is projected to grow at an 11.5% CAGR through 2035, and unified API strategies are associated with 20 to 30% gains in development efficiency and 15% cost reductions, according to OMR Global's API banking market summary. That's the business context. The engineering lesson is more concrete: complexity compounds unless you contain it.

The best integration plans usually come from lessons learned the expensive way.

Lessons worth keeping

- Own the abstraction early. If every feature team talks directly to external banking endpoints, your product logic turns into connector maintenance.

- Design auth as a product flow. Consent, redirects, token refresh, and re-authentication affect reliability as much as security.

- Normalize once. A canonical internal model is cheaper than endless provider-specific exceptions.

- Expect partial failure every day. Timeouts, retries, duplicate events, and delayed reconciliation aren't rare events in distributed finance systems.

- Treat documentation as an engineering asset. Clear internal contracts, error semantics, and sequence diagrams reduce regressions and support load. If your team needs a practical model for that, this expert guide to API documentation is useful.

What works versus what doesn't

What works is a narrow integration boundary, explicit observability, and a unified strategy wherever source-system fragmentation is high.

What doesn't work is assuming the first successful connection proves the architecture. It only proves the demo.

For developers building multi-system B2B software, that last point matters beyond finance. The same reasoning that stops a commerce team from writing separate long-term connectors for every cart and marketplace should shape banking decisions too. A unified layer won't remove all complexity, but it moves that complexity into a place where it can be tested, monitored, and changed without destabilizing the rest of the product.

If you build B2B software that needs commerce connectivity alongside financial workflows, API2Cart gives you a unified API for connecting to shopping carts and marketplaces without maintaining separate integrations for each platform. That can simplify the non-banking side of your stack so your team can spend more time on reconciliation logic, payments workflows, and operational resilience instead of connector plumbing.