Your overnight sync didn’t fail because the API was “random.” It failed because your integration drove too fast on a road with rules you didn’t model.

That’s the common pattern in eCommerce integrations. Orders import fine in testing. Product updates look stable at low volume. Then a store goes busy, a marketplace starts lagging, your worker pool fans out, and the logs fill with retries, partial imports, and a few 429 Too Many Requests responses buried between timeout errors. By morning, support has a ticket about missing orders, stale stock, or duplicate updates.

Developers usually debug the wrong layer first. They inspect auth, payload shape, webhook delivery, pagination, even database locks. Those all matter, but api rate limit behavior is often the silent governor behind the bigger failure. One connector gets throttled, the queue backs up, retries amplify traffic, and a localized throttle becomes a system-wide sync delay.

The hard part is that rate limiting rarely announces itself in a friendly way. Some APIs return clean headers. Some return only a status code. Some allow bursts but punish repetition. Some document one policy and enforce another in practice under load. Financial APIs make this painfully explicit. Interactive Brokers, for example, enforces 60 requests within any ten-minute period for historical data, blocks identical historical requests within 15 seconds, flags six or more requests for the same contract, exchange, and tick type within two seconds, and caps simultaneous open historical data requests at 50 according to Interactive Brokers historical data limitations.

That kind of rule set isn’t unusual in spirit. It’s just unusually well defined.

Reliable integrations come from treating rate limits as part of the contract, not as an edge case. If your product moves orders, inventory, prices, shipments, and catalog data across many carts and marketplaces, that shift in thinking prevents failed jobs and data discrepancies far more effectively than another round of ad hoc retries.

The Silent Failure of Your Data Sync

A familiar incident starts with an inventory sync that looked harmless. Your scheduler wakes up a batch job, fetches changed products, pushes updates to a few stores, then polls for confirmation. Nothing exotic. But one store has a sale running, another is backfilling orders, and your own retry logic starts stacking duplicate calls because one endpoint got slow.

The job doesn’t crash cleanly. It degrades.

A few requests get throttled. Then more workers retry at the same time. Some records update, some don’t. Your queue says the run finished, but the merchant sees stock mismatches because half the writes landed and half got deferred. If you rely on “retry three times and hope,” you often create a traffic spike exactly when the platform is already asking you to slow down.

Practical rule: A failed sync is often a traffic-shaping problem before it’s a business-logic problem.

This matters more in eCommerce than in simpler SaaS integrations because syncs are chained. A delayed order import affects fulfillment. A delayed inventory update affects oversell risk. A delayed price sync affects listing accuracy. Rate limiting isn’t just about one rejected request. It’s about how one rejected request ripples into stale data in adjacent workflows.

Developers usually notice rate limits late because the first symptom isn’t “you are rate limited.” It’s one of these:

- Backlog growth: Workers keep accepting work faster than they can complete it.

- Partial completion: A sync job finishes with quiet gaps instead of a hard failure.

- False timeout diagnosis: Slow responses get treated as network instability when the platform is throttling.

- Retry storms: Your own client multiplies load with synchronized retries.

The fix starts with a mindset change. Treat every external API like a toll booth. Cars can keep moving, but only at the pace the booth can process. If you send ten lanes of traffic into a two-lane checkpoint, congestion is your fault, not the road’s.

Why Rate Limits Are Critical in Multi-Cart eCommerce

Multi-cart integration work gets messy because each platform sets its own traffic rules. One API tolerates bursts. Another wants steady pacing. A marketplace might limit by method, while a shopping cart applies a broader tenant or app-level quota. If your product connects to many systems, you’re not managing one api rate limit. You’re orchestrating dozens of different ones at the same time.

That’s why multi-cart integrations break in ways a single-API app doesn’t. Your connector isn’t just driving one truck through one city. It’s routing a fleet through cities where every street has different speed limits, toll rules, and lane closures. The same polling cadence that’s safe for one platform may be enough to trigger throttling on another.

A documented example of this problem is stark. A 2026 GetKnit analysis of 50+ third-party APIs found that 70% of integration bugs stem from unhandled cross-API throttling, and cascading 429 errors cause 30-50% integration failure rates in high-volume polling across platforms with different limits, including examples such as Shopify’s 2 RPS leaky bucket and Amazon’s 1-5 RPS per method, as noted in Tyk’s overview of API rate limiting practices.

What makes eCommerce harder than a typical SaaS sync

A CRM sync often moves one category of object on a predictable cadence. eCommerce doesn’t.

You’re juggling:

- Orders: Time-sensitive and operationally critical.

- Inventory: Highly volatile, especially during promotions.

- Prices and listings: Often bulk-heavy and sensitive to sequence.

- Customers and shipments: Lower frequency, but still part of the same throughput budget.

These workloads collide. A surge in order imports can starve inventory updates if your integration doesn’t prioritize queues and shape traffic per connector.

What goes wrong when you ignore cross-platform throttling

Most integration failures don’t come from one bad request. They come from traffic coupling.

A simple pattern looks like this:

| Situation | What the code does | What actually happens |

|---|---|---|

| One cart slows down | Worker retries immediately | You create a burst against a stressed API |

| Multiple stores sync at once | Shared pool fans out evenly | The strictest API becomes the bottleneck |

| Polling interval stays fixed | Job keeps requesting recent changes | Duplicate low-value traffic eats quota |

| Failures are retried globally | Queue resubmits whole batches | Healthy connectors get delayed by one noisy platform |

If you don’t isolate per-platform rate behavior, your fastest connectors inherit the problems of your slowest one.

That’s why “just add more workers” usually backfires. More concurrency helps only when the downstream can absorb it. In multi-cart eCommerce, the bottleneck is usually external and uneven. Good architecture respects that unevenness instead of trying to flatten it away.

Decoding API Rate Limit Signals

When an API starts pushing back, you need your client to read the signals instead of guessing. The most obvious one is HTTP 429, which means the server is rejecting requests because you crossed a traffic threshold. That threshold may be based on a fixed count, a moving window, a weighted quota, or burst behavior. Your code doesn’t need to know the internal algorithm to respond correctly. It needs to observe the response and adapt.

The first rule is simple. Never treat 429 like a generic transient failure. A timeout might justify an immediate retry on another connection. A rate-limit response usually means the server already told you to slow down.

The headers worth inspecting

Several APIs expose rate state directly in headers. The most useful are:

Retry-Aftertells you when it’s safe to try again.X-RateLimit-Limittells you the ceiling for the current window or policy.X-RateLimit-Remainingtells you what quota is left.X-RateLimit-Resettells you when the counter or window resets.

Across providers, the shape of these limits varies wildly. Tiger Open Platform uses historical data pull quotas from 20 to 2,000 requests depending on client qualification, while Insider Academy’s APIs range from 1 request per day to 5,000 requests per second, as described in Insider Academy’s API rate limit documentation. That spread is exactly why hard-coding one retry policy for every connector is fragile.

If you’re comparing service documentation patterns across platforms, resources like Gemini API rate limits are useful because they show how providers communicate usage boundaries to developers.

A practical Python example

import time

import requests

url = "https://example-api.com/orders"

response = requests.get(url, headers={"Authorization": "Bearer TOKEN"})

if response.status_code == 429:

retry_after = response.headers.get("Retry-After")

wait_seconds = int(retry_after) if retry_after and retry_after.isdigit() else 5

time.sleep(wait_seconds)

else:

limit = response.headers.get("X-RateLimit-Limit")

remaining = response.headers.get("X-RateLimit-Remaining")

reset = response.headers.get("X-RateLimit-Reset")

print("Status:", response.status_code)

print("Limit:", limit)

print("Remaining:", remaining)

print("Reset:", reset)

This example is intentionally basic. In production, you’d route the response metadata into a scheduler or request governor rather than sleeping inside a hot path. But the core behavior is right. Read the headers, change your pace, and avoid blind retries.

Don’t optimize your request volume before you can observe it. Headers are operational feedback, not decoration.

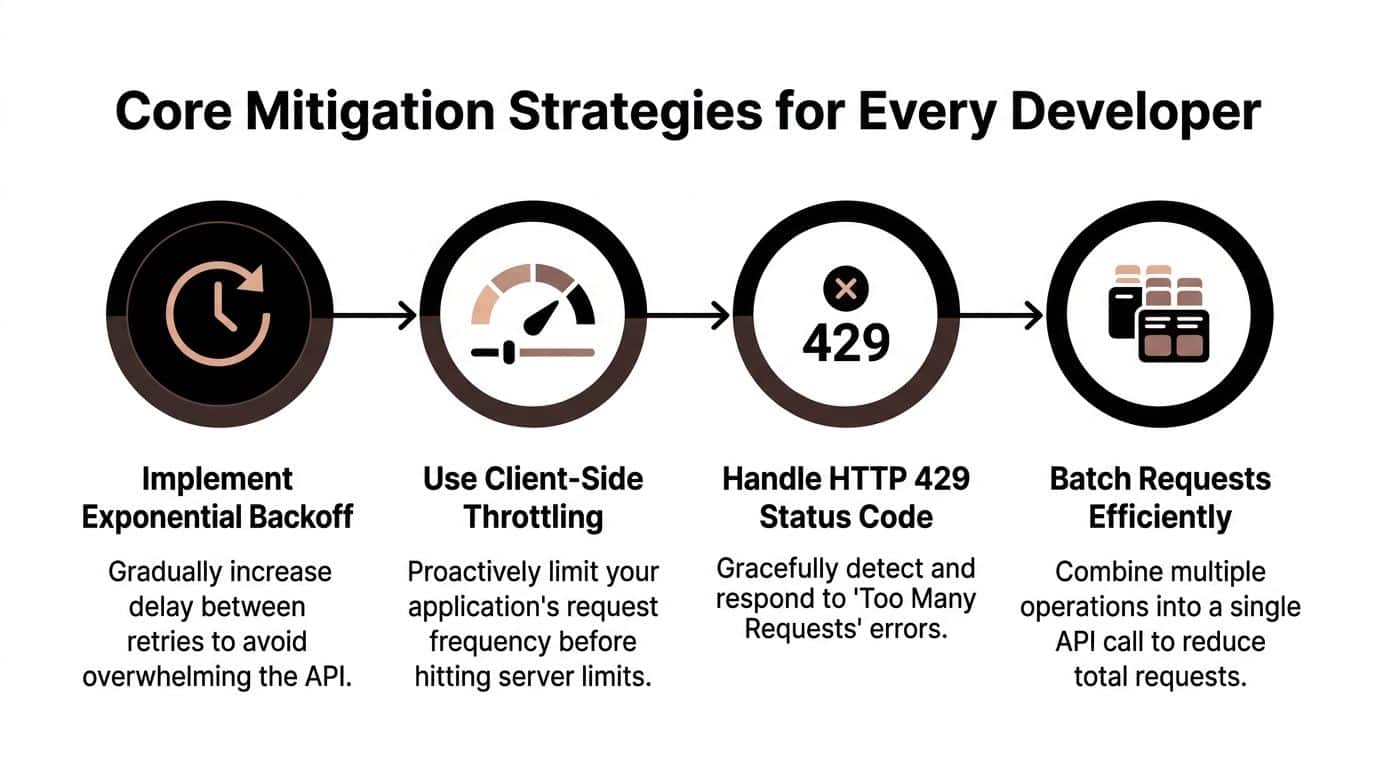

Core Mitigation Strategies for Every Developer

The first layer of defense isn’t exotic. It’s disciplined client behavior. Most integrations become dramatically more stable when you combine controlled retries, client-side throttling, and request reduction. The mistake is implementing these as isolated patches instead of a coherent policy.

Start with exponential backoff and jitter

If an API returns 429, waiting a fixed delay for every retry creates synchronized traffic. All your workers wake up together, hit the same endpoint again, and trigger the same rejection. That’s why exponential backoff with jitter works better.

Use this pattern:

- Retry only when the error is retryable.

- Prefer

Retry-Afterwhen the API sends it. - If no guidance is provided, increase delay between retries.

- Add randomness so clients don’t stampede at the same instant.

- Cap total retry time so one slow connector doesn’t block the whole pipeline.

A simple Python sketch:

import random

import time

import requests

def get_with_backoff(url, headers, max_retries=5):

delay = 1.0

for attempt in range(max_retries):

response = requests.get(url, headers=headers)

if response.status_code != 429:

return response

retry_after = response.headers.get("Retry-After")

if retry_after and retry_after.isdigit():

sleep_for = int(retry_after)

else:

sleep_for = delay + random.uniform(0, 0.5)

time.sleep(sleep_for)

delay = min(delay * 2, 30)

return response

Throttle before the server has to

Client-side throttling is quieter and cheaper than server-enforced rejection. Keep a per-connector request budget in memory or in a shared store, and make workers acquire permission before making outbound calls. That changes the system from “send then recover” to “pace then send.”

The same logic applies when deciding between polling and event-driven updates. The trade-off depends on platform behavior and workload shape. API2Cart’s comparison of API vs webhook integration patterns is useful if you’re deciding when polling is controllable and when events remove unnecessary calls.

Use batching and understand token buckets

Some APIs support bulk endpoints. Use them. Fewer requests means less header parsing, less retry overhead, and fewer opportunities to hit a threshold. Batching also helps when you’re moving catalog or order data in bursts.

The underlying rate-limiter many APIs use is often easier to understand with an arcade analogy. Think of a token bucket as a bucket of game tokens near the entrance. Each request spends one token. Tokens refill steadily over time. If you’ve saved up tokens, you can spend several quickly and handle a burst. But once the bucket is empty, you wait for more tokens to arrive.

According to Axway’s API quota discussion, the token bucket algorithm is well suited to bursty eCommerce traffic, allowing a burst size such as 500 TPS followed by a sustained rate, and once the burst depletes the bucket, excess traffic gets HTTP 429 responses to protect backend quality of service.

That’s why token buckets feel forgiving right up until they don’t. They allow short bursts by design. They are not permission for sustained overproduction.

A practical checklist:

- Batch reads when possible: Pull grouped changes instead of one record at a time.

- Separate read and write paths: Writes usually deserve stricter pacing.

- Throttle at the connector boundary: Don’t let internal worker speed dictate external call speed.

- Honor server feedback: If headers exist, your local assumptions are secondary.

Advanced Patterns for Resilient Data Syncs

Retries and throttles solve the first layer of instability. The next layer is architectural. If your integration handles many stores and many object types, reliability comes from how you shape work before it becomes outbound traffic.

Control parallelism instead of maximizing it

A common anti-pattern is one global worker pool blasting requests to every connector. That design is easy to code and hard to operate. One strict platform gets overwhelmed while another sits idle, and you have no clean way to tune either.

A better model is bounded parallelism per integration channel. Give each connector its own concurrency ceiling, then let a higher-level scheduler allocate work based on observed behavior. That way one marketplace can run with a narrow lane while another handles broader throughput.

Use this approach when:

- A platform throttles on concurrency: Limit in-flight requests, not just request count.

- Response times vary sharply: Slow connectors need smaller active pools.

- One store goes noisy: Localize the slowdown instead of contaminating all jobs.

Queue first, then dispatch. It’s easier to shape demand before a request is sent than to recover after a remote API rejects it.

Build a request queue that understands sync value

Not all requests deserve equal urgency. A shipment acknowledgment usually matters more than a catalog refresh. A low-value poll for unchanged customer data shouldn’t crowd out recent order imports.

That means your queue needs more than FIFO. It should understand:

| Request type | Suggested treatment | Why |

|---|---|---|

| Order import | Higher priority, low latency | Delays affect fulfillment workflows |

| Inventory update | Tight pacing, deduping enabled | Frequent changes can flood writes |

| Product catalog pull | Batch-friendly, cache-aware | Data is often large and less urgent |

| Customer lookup | Opportunistic, lower cost | Usually less time-sensitive |

Resource weighting matters. Standard guidance often underplays the fact that different operations don’t cost the same. In eCommerce, inventory syncs can consume 5-10x more compute than customer lookups, and that disparity causes 60% of enterprise throttling incidents, according to DataDome’s discussion of API rate limiting.

If your scheduler counts every request equally, it will make bad decisions. A bulk inventory write should “cost” more in your internal budget than a lightweight customer fetch.

Cache aggressively where freshness rules allow it

A lot of integrations burn quota on repeated reads that don’t need to happen. Store metadata, static catalog attributes, and recently fetched pages are common examples. Smart caching doesn’t just cut traffic. It protects your retry budget for calls that matter.

Three practical rules help:

- Cache reference data longer: Taxonomies and store settings usually change less often.

- Use change filters where available: Poll only what changed since the last successful checkpoint.

- Deduplicate within the queue: If five jobs ask for the same product state, one outbound call should satisfy all five.

For teams tuning real-time behavior, API2Cart’s overview of real-time API synchronization is a useful reference point for balancing event-driven updates with controlled polling in sync-heavy environments.

Polling versus webhooks is a control trade-off

Webhooks reduce unnecessary polling, but they aren’t a universal answer. They can arrive out of order, be retried by the sender, or fail if your receiver is down. Polling is more expensive, but it gives you control over cadence and recovery.

The stronger pattern is usually hybrid. Use webhooks for fast signals, then confirm state with targeted reads. Keep polling narrow, checkpointed, and rate-aware. That avoids blind trust in events while still keeping request volume under control.

How API2Cart Manages Rate Limit Complexity

At some point, teams have to decide whether rate-limit orchestration is part of their product or part of their plumbing. For a B2B SaaS company connecting to many carts and marketplaces, writing and maintaining bespoke traffic logic for every connector is expensive work that competes with actual product development.

A unified integration layer changes that trade-off. Instead of teaching your application the pacing rules of dozens of APIs, you route through one abstraction and let that layer absorb much of the connector-specific behavior. That doesn’t remove the need for good sync design on your side, but it does reduce the amount of platform-by-platform throttling logic your team must own.

Why gateway-level enforcement fits multi-cart work

For B2B SaaS, gateway-level enforcement scales better than in-code counters because it centralizes traffic policy, supports tiering, and exposes usage feedback. Best practices such as tiered limits like 100 req/min for free and 5000/hour for paid, request weighting, and headers like X-RateLimit-Remaining can also reduce support tickets by 50% through transparent quotas, according to REST API rate limit guidelines.

That principle maps well to multi-cart integration. If each connector has distinct traffic behavior, a gateway gives you one place to enforce:

- Per-app quotas

- Connector-aware throttling

- Retry and backoff policy

- Observability for remaining budget

- Isolation between noisy and healthy traffic lanes

An API proxy layer is often the technical shape that makes this manageable. API2Cart’s explanation of an API proxy server is relevant here because proxying is how many platforms centralize translation, routing, and traffic control across many downstream services.

Where a unified API helps an integration developer

API2Cart emerges as a practical option. It provides a single API for connecting to 60+ shopping carts and marketplaces and exposes 100+ methods for orders, products, customers, shipments, inventory, and catalog workflows. For a developer building OMS, WMS, PIM, ERP, or shipping integrations, that means less custom connector code and fewer places where store-specific throttling rules leak into business logic.

In practical terms, a unified layer helps with use cases like:

- Order import automation: One ingestion path instead of many store-specific polling loops.

- Inventory synchronization: Centralized control over high-frequency stock updates.

- Price and listing updates: Reduced connector variance when publishing changes.

- Reporting and analytics syncs: More consistent access patterns across stores.

The speed gain comes from reducing custom plumbing, not from bypassing limits. Good unified APIs still need to respect downstream quotas. The difference is that the complexity is concentrated in one integration boundary instead of spread across every connector your team maintains.

Building Future-Proof Integrations

The healthiest way to think about an api rate limit is as a stability contract. It tells you how fast the road can safely move, and your job is to build a vehicle that respects it under load.

That means designing for pacing from day one. Read 429 responses correctly. Honor headers. Queue requests before dispatch. Limit concurrency per connector. Cache low-value reads. Treat expensive operations as expensive. If your team also works on adjacent commerce flows, architecture habits from other domains matter too. For example, teams planning to integrate payment gateway workflows face the same need for disciplined retries, sequencing, and failure isolation.

The biggest shift is moving from reactive debugging to proactive traffic design. When you do that, failed jobs stop looking mysterious. They become predictable engineering problems with clear controls.

If your team is tired of building and maintaining one-off cart connectors, API2Cart is a practical way to unify access to many eCommerce platforms through one API so you can spend more time on sync logic, product workflows, and merchant-facing features instead of connector-specific plumbing.