Banking system integration stopped being a back-office concern a long time ago. It now sits in the critical path of product delivery, compliance, customer onboarding, fraud controls, and partner expansion.

The scale tells the story. In 2023, the global system integration market was valued at USD 434.47 billion, and it was forecast to reach USD 1,046.9 billion by 2032. Within that market, BFSI (banking, financial services, and insurance) held over 22.08% of the market in 2023, the largest industry slice reported in the source, according to system integration market data from SNS Insider. For developers, that means integration work in finance isn't edge work. It's one of the largest software implementation problems in the enterprise stack.

Engineers rarely struggle because they can't call an API. They struggle because every bank, processor, identity system, and compliance workflow speaks a slightly different dialect, exposes different event models, and fails in different ways. The hard part isn't connectivity. It's building something maintainable when the environment is fragmented, regulated, and still anchored to old core systems.

That makes banking system integration a developer discipline, not just an architecture diagram. You need patterns that absorb inconsistency, security controls that hold under audit, and a delivery model that doesn't turn every new connection into a custom engineering project.

Why Banking Integration Is a Core Developer Skill in 2026

By 2026, open banking programs, embedded finance rollouts, and real-time payment expectations will have pushed financial connectivity into products that were never designed as banking software. That shift changes the developer job. Integration work now affects roadmap speed, support load, audit readiness, and how quickly a team can launch in a new market.

The integration layer is part of the product surface.

If an application fails to sync balances, posts payment events late, mishandles KYC responses, or breaks ledger reconciliation, users do not separate that from the rest of the experience. They see one product that does not work reliably. For engineering teams, that means banking integration is no longer a specialist concern handed off to middleware teams. It sits inside day-to-day product delivery.

Why developers feel the pressure first

Developers usually inherit three constraints at once:

- Product teams want new connections fast. They need bank data, payouts, account verification, and onboarding flows to ship on a deadline.

- Risk and compliance teams need traceability. Authentication, consent handling, data retention, and audit logs all shape the implementation.

- The systems rarely behave alike. One bank offers clean APIs and event callbacks. Another still depends on file exchange, polling, or partial coverage with edge cases you only find in production.

That combination makes integration skill a multiplier for senior engineers. REST and JSON are the easy part. The hard part is handling retries, idempotency, reconciliation, partial failure, version drift, and inconsistent error models without turning each new connection into a custom rewrite.

2026 matters because several trends reach implementation maturity at the same time. Real-time payments are becoming a normal product requirement in more markets. Open banking standards are getting baked into commercial workflows instead of pilot projects. More non-bank software companies are also shipping embedded finance use cases as a standard feature, not an experiment. That pushes banking integration from a niche skill into a baseline capability for product teams.

The strategic layer behind the code

The business case is simple. Teams that integrate financial systems well ship faster and spend less time cleaning up exceptions after launch.

The trade-off is also simple. You can build one-off connectors for each institution and keep initial delivery moving, or you can invest in a normalized integration layer that absorbs differences between providers. The first option looks cheaper on sprint plans. The second usually wins by the third or fourth integration, when contract mapping, auth flows, monitoring, and support paths start repeating.

This is why the unified API model matters to developers. Teams in eCommerce learned this years ago. Instead of writing a separate integration for every store platform, they used a common abstraction to reduce connector sprawl. Banking teams face the same fragmentation problem across banks, processors, KYC providers, and ledger systems. A unified API does not remove every edge case, but it can move common logic into one layer and cut the amount of bespoke code your team has to own.

That same logic applies when teams use services that streamline multi-channel data via iPaaS. The value is not abstraction for its own sake. The value is fewer custom mappings, faster onboarding of new partners, and a lower maintenance burden when one provider changes its contract.

A practical rule holds up in nearly every banking program. The first integration proves demand. The fifth proves whether the team designed for scale.

Core Integration Architectures and Patterns

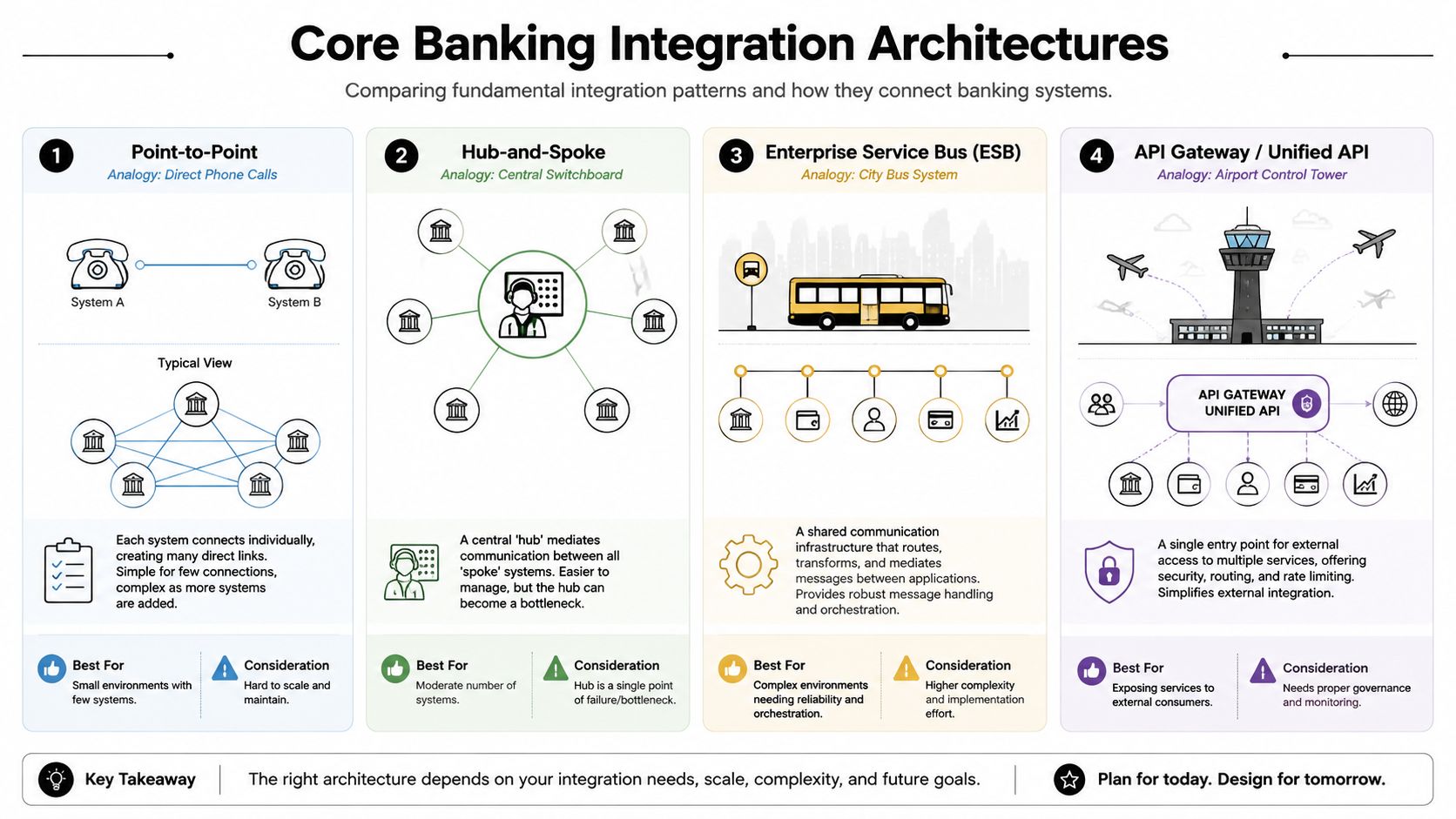

Most integration failures start with the wrong topology. Teams pick the fastest path for one connection, then discover they accidentally designed a maintenance problem.

Point-to-point works until it doesn't

Point-to-point is the direct wire. System A talks to System B. For a very small footprint, that's fine. It keeps latency low and avoids adding another platform in the middle.

The problem is scale. According to Itexus on banking integration patterns, point-to-point integration creates O(n²) complexity as systems are added, while API and ESB middleware architectures reduce management to O(n) through a central hub. That difference isn't abstract math. It's the difference between a manageable integration estate and a brittle one.
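To make the growth rates concrete, here is a minimal sketch that counts links under each topology. It assumes the worst case for point-to-point, where every system talks directly to every other system; real estates are rarely a full mesh, so treat the numbers as an upper bound. The function names are illustrative.

```python
def point_to_point_links(n: int) -> int:
    """Links needed when every system connects directly to every other one."""
    return n * (n - 1) // 2


def hub_links(n: int) -> int:
    """Links needed when every system connects once to a central hub."""
    return n


for n in (3, 10, 25):
    print(f"{n} systems: {point_to_point_links(n)} direct links, {hub_links(n)} hub links")
```

At 10 systems the full mesh already needs 45 links against 10 for the hub, and every one of those links carries its own auth, mapping, and monitoring.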

A direct connection model usually creates these issues:

- Tight coupling: A change in one API contract breaks one or more consumers.

- Repeated logic: Authentication, retries, transformation, and logging get rebuilt in every connector.

- Opaque failures: Troubleshooting spans many custom links instead of one managed exchange point.

Hub-and-spoke and ESB fix the sprawl

A hub-and-spoke model acts like a switchboard. Systems connect once to the hub, and the hub handles routing and mediation. An ESB extends that idea with message transformation, orchestration, and more reliable delivery control.

This works well when you need to normalize old and new systems in the same environment. You can map fields, enrich payloads, and isolate old interfaces from modern consumers.

The biggest architectural win in banking integration is often boring. Put translation, routing, and policy enforcement in one place so product teams stop rebuilding them everywhere else.

There is a trade-off. The middleware layer becomes a product in its own right. It needs governance, ownership, release discipline, and operational support. A badly governed hub can turn into a bottleneck.

API-led connectivity is the cleaner external contract

For teams exposing banking capabilities to apps, partners, or internal product squads, API-led design usually creates a better developer experience. You publish stable contracts at the edge and absorb backend inconsistency behind them.
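A small sketch of what "stable contract at the edge" can look like in code. Everything here is hypothetical: `Balance` is an assumed product-facing model, and the two adapters stand in for banks that report the same fact in different shapes (integer cents versus decimal strings).

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Protocol


@dataclass(frozen=True)
class Balance:
    """Stable contract that product code depends on."""
    account_id: str
    amount: Decimal
    currency: str


class BalanceSource(Protocol):
    def get_balance(self, account_id: str) -> Balance: ...


class BankAAdapter:
    """Hypothetical bank that reports integer minor units (cents)."""

    def __init__(self, raw: dict[str, dict]) -> None:
        self._raw = raw

    def get_balance(self, account_id: str) -> Balance:
        rec = self._raw[account_id]
        return Balance(account_id, Decimal(rec["cents"]) / 100, rec["ccy"])


class BankBAdapter:
    """Hypothetical bank that reports decimal strings and lowercase currency codes."""

    def __init__(self, raw: dict[str, dict]) -> None:
        self._raw = raw

    def get_balance(self, account_id: str) -> Balance:
        rec = self._raw[account_id]
        return Balance(account_id, Decimal(rec["bal"]), rec["currency"].upper())


def show_balance(source: BalanceSource, account_id: str) -> str:
    """Product code only sees the Balance contract, never the raw payload."""
    b = source.get_balance(account_id)
    return f"{b.account_id}: {b.amount:.2f} {b.currency}"
```

The product function never branches on which bank it is talking to. Backend quirks stay inside the adapter that owns them.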

That same logic applies in other fragmented domains. If you're trying to streamline multi-channel data via iPaaS, the value comes from reducing connector sprawl and standardizing how downstream systems consume information.

A practical comparison looks like this:

| Pattern | Best fit | Main risk |
|---|---|---|
| Point-to-point | One or two tightly scoped integrations | Dependency sprawl |
| Hub-and-spoke | Centralized mediation across several systems | Hub bottleneck |
| ESB | Complex routing and transformation needs | Governance overhead |
| API gateway or unified API | Stable access layer for many consumers | Requires strong contract discipline |

For teams designing secure mediation layers, an API proxy server pattern is often part of the answer. It gives you a controlled edge where routing, authentication, throttling, and observability can stay consistent even when backend services aren't.

Real-time and batch both still matter

Developers often want to force everything into real time. That's not always possible, and it isn't always necessary.

Use real-time flows where action speed changes outcomes, such as alerts, transaction screening, or user-facing balance changes. Keep batch where the source system only supports scheduled exports or where the business process tolerates delay. Mature banking system integration accepts both and designs reconciliation between them.
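Reconciliation between the two modes can start very small. The sketch below assumes you hold one view of balances built from real-time events and another loaded from a scheduled batch export, both keyed by account ID. The function and report keys are illustrative, not a standard.

```python
from decimal import Decimal


def reconcile(
    realtime: dict[str, Decimal], batch: dict[str, Decimal]
) -> dict[str, list[str]]:
    """Compare balances built from events against a batch export."""
    report: dict[str, list[str]] = {
        "missing_in_realtime": [],
        "missing_in_batch": [],
        "mismatched": [],
    }
    for account_id in sorted(realtime.keys() | batch.keys()):
        if account_id not in realtime:
            report["missing_in_realtime"].append(account_id)
        elif account_id not in batch:
            report["missing_in_batch"].append(account_id)
        elif realtime[account_id] != batch[account_id]:
            report["mismatched"].append(account_id)
    return report


report = reconcile(
    {"a1": Decimal("10.00"), "a2": Decimal("5.00")},
    {"a1": Decimal("10.00"), "a2": Decimal("7.50"), "a3": Decimal("1.00")},
)
print(report)
```

What you do with each bucket is a business decision. The point is that drift between the two paths becomes a report instead of a support ticket.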

Understanding Key Banking Communication Standards

Architecture defines how systems connect. Standards define what the messages mean. Without shared standards, every integration becomes a custom translation project.

Why standards matter to developers

A common mistake is treating standards as compliance paperwork. They aren't. They reduce ambiguity in payloads, workflows, and security expectations.

In banking system integration, standards matter because they help teams answer practical questions early:

- Which fields are mandatory?

- What does a payment status mean?

- How should identities and permissions be represented?

- Which party owns consent, authentication, and audit evidence?

If you don't settle those questions with a standard or a clear internal contract, developers end up encoding assumptions in adapters. That's where long-term fragility starts.

The easiest way to think about ISO 20022 is as a structured language for financial messages. It gives institutions a more consistent way to represent payment, cash management, securities, and related financial data.

For developers, the payoff is simple. You spend less time writing one-off translation logic when the upstream and downstream systems already align on message structure and semantics. You still need mapping, but you avoid inventing a private dictionary for every integration.
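As an illustration of what shared structure buys you, the sketch below reads a heavily trimmed fragment shaped like an ISO 20022 `pacs.008` credit transfer using only the Python standard library. The sample is an assumption for demonstration: it omits mandatory blocks such as the group header and would not validate against the real schema.

```python
import xml.etree.ElementTree as ET
from decimal import Decimal

NS = {"p": "urn:iso:std:iso:20022:tech:xsd:pacs.008.001.08"}

# Trimmed, illustrative fragment. Not a schema-valid message.
SAMPLE = """<Document xmlns="urn:iso:std:iso:20022:tech:xsd:pacs.008.001.08">
  <FIToFICstmrCdtTrf>
    <CdtTrfTxInf>
      <PmtId><EndToEndId>E2E-0001</EndToEndId></PmtId>
      <IntrBkSttlmAmt Ccy="EUR">125.50</IntrBkSttlmAmt>
    </CdtTrfTxInf>
  </FIToFICstmrCdtTrf>
</Document>"""


def extract_transfers(xml_text: str) -> list[dict]:
    """Pull the end-to-end ID, amount, and currency from each transfer."""
    root = ET.fromstring(xml_text)
    transfers = []
    for tx in root.findall(".//p:CdtTrfTxInf", NS):
        amount = tx.find("p:IntrBkSttlmAmt", NS)
        if amount is None or amount.text is None:
            continue  # skip entries without a settlement amount
        transfers.append(
            {
                "end_to_end_id": tx.findtext("p:PmtId/p:EndToEndId", namespaces=NS),
                "amount": Decimal(amount.text),
                "currency": amount.get("Ccy"),
            }
        )
    return transfers


print(extract_transfers(SAMPLE))
```

Because element names and meaning come from the standard, the same extraction logic has a chance of working across counterparties. In production you would validate against the official schema for the exact message version you exchange.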

Open banking standards turn access into a governed interface

Open banking changed the developer environment by making secure, permissioned data access part of the banking conversation instead of a special project. PSD2 is part of that reality because it pushed banks to support secure third-party access in regulated contexts.

That doesn't mean every bank implementation feels uniform. Far from it. But the model matters. It establishes a pattern where customer-permissioned access, identity, and auditability are first-class concerns.

A practical reference point is this open banking API guide, which helps frame how architecture, security, and data-sharing models fit together from a developer perspective.

Standards don't remove integration work. They remove avoidable translation work.

What standards don't solve

Standards don't fix poor source data, weak sandbox quality, or undocumented rate limits. They also don't guarantee event consistency across institutions. Teams still need normalization layers, validation logic, and careful versioning.

That said, standards give you a much better starting point. In a fragmented ecosystem, a shared language is one of the few things that prevents every connection from becoming a custom build.

Security work in banking integration isn't a hardening pass at the end. It's part of the design from the first endpoint you expose and the first field you map.

The integration boundary is where trust breaks first

Many legacy banking systems weren't designed for real-time data processing, even though modern use cases such as fraud detection and AML screening depend on it. According to SymphonyAI on legacy banking integration, successful integrations must address data standardization, tokenization, and compliance with PSD2, PCI DSS, and ISO 27001 at the integration boundary.

That phrase matters. The integration boundary is where inconsistent source data, security exposure, and policy drift usually show up first. If you don't enforce rules there, problems leak into every downstream service.

What developers should build in from day one

Strong implementations usually include these controls:

- Encrypt data in transit and at rest: Treat both as mandatory. A secure transport channel isn't enough if downstream stores, logs, or queues expose sensitive fields.

- Tokenize sensitive values: Keep raw financial or identity data out of services that don't need it.

- Validate and standardize inbound data: Normalize formats at entry points so your internal systems don't inherit source-level chaos.

- Generate audit trails automatically: Teams need a defensible record of who accessed what, when, and under which permission model.

- Segment privileges tightly: A service that reads balances shouldn't automatically gain write authority over payments or identity records.
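The tokenization control above can be sketched in a few lines. This is an in-memory stand-in, not a real vault: `TokenVault`, `SENSITIVE_FIELDS`, and the field names are assumptions, and a production system would use a hardened tokenization service with its own access controls.

```python
import secrets


class TokenVault:
    """In-memory stand-in for a tokenization service (illustrative only)."""

    def __init__(self) -> None:
        self._by_token: dict[str, str] = {}
        self._by_value: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so the same value always maps to one token.
        if value in self._by_value:
            return self._by_value[value]
        token = "tok_" + secrets.token_hex(16)
        self._by_token[token] = value
        self._by_value[value] = token
        return token

    def detokenize(self, token: str) -> str:
        return self._by_token[token]


# Assumed field names; adjust to your own schema.
SENSITIVE_FIELDS = {"iban", "card_number"}


def tokenize_record(record: dict, vault: TokenVault) -> dict:
    """Return a copy with sensitive fields replaced by opaque tokens."""
    return {
        key: vault.tokenize(value) if key in SENSITIVE_FIELDS else value
        for key, value in record.items()
    }


vault = TokenVault()
safe = tokenize_record({"iban": "DE89370400440532013000", "amount": "10.00"}, vault)
print(safe)
```

Downstream services, queues, and logs then only ever see the token. Only the few components allowed to call `detokenize` can recover the raw value.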

Compliance is a software concern

Developers sometimes treat compliance as something legal and security teams "handle later." In practice, compliance shows up in schema design, retention logic, consent handling, event logs, and incident response.

A few implementation questions expose the actual work fast:

| Question | Why it matters |
|---|---|
| Can you trace every state change? | Audit and dispute resolution depend on this |
| Can you revoke access cleanly? | Consent and permission scope change over time |
| Can you isolate sensitive fields? | Reduces blast radius during incidents |
| Can you explain data lineage? | Regulators and internal risk teams will ask |

Teams that are preparing fintech startups for SOC 2 often discover the same lesson. You can't bolt operational trust onto an integration after launch. Logging, access control, change management, and evidence collection need to exist in the delivery process itself.

Build the audit trail while building the feature. Reconstructing it later is slow, expensive, and usually incomplete.
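One way to build the trail alongside the feature is an append-only log that records who did what, to which resource, under which permission scope. The sketch below also chains each entry to the previous one with a SHA-256 hash so that edits are detectable. The hash chain is an added technique chosen for illustration, not something the controls above require, and the class and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    """Stable hash of an entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only, hash-chained audit trail (in-memory sketch)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, actor: str, action: str, resource: str, scope: str) -> dict:
        body = {
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "resource": resource,
            "scope": scope,
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if any entry was altered or the chain was broken."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("svc-payments", "read_balance", "account:123", "balances:read")
log.record("svc-payments", "create_payout", "payout:987", "payouts:write")
print(log.verify())
```

A real deployment would write to durable, access-controlled storage. The useful habit is the call to `record` sitting next to the business action from day one.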

A Practical Integration Planning Checklist for Developers

Most banking integrations go off track before a single production request is sent. The project starts with vague requirements, hidden assumptions, and no agreement on failure behavior.

Start with discovery, not endpoint mapping

The first task isn't writing code. It's reducing ambiguity.

Ask for concrete answers to these questions:

- Which business action triggers the integration? "Sync transactions" is too vague. "Create a fraud review case when a screened payment is flagged" is specific enough to design around.

- Which system owns each field? If account status, customer identity, and transaction status all come from different systems, define the source of truth before implementation.

- What are the timing expectations? Immediate, near-real-time, hourly, and nightly batch all require different error handling and user expectations.

- What happens when the upstream system is wrong? Banking data can be late, duplicated, incomplete, or reissued. Your product needs a policy for that.

Pick architecture based on failure tolerance

A clean design starts with a practical question: where do you want complexity to live?

If the connection count is small and the workflow is isolated, a direct adapter can be acceptable. If you'll support many banks, internal services, or regional variations, put abstraction in the middle and keep the product-facing contract stable.

Use this checklist before locking architecture:

- Connection growth: Are you planning for one institution or many?

- Transformation depth: Are you just passing fields through, or reconciling meaning across systems?

- Operational ownership: Who runs retries, monitoring, and contract versioning?

- Change frequency: How often will upstream interfaces drift?

Define implementation details early

At this stage, projects become real. A short written integration spec should answer:

- Authentication model: Token exchange, certificate-based trust, delegated user consent, or service account access.

- Rate limiting and backoff: What happens when you hit throughput limits. Don't leave this to generic client defaults.

- Idempotency strategy: Can the same request be safely retried without duplicate business effects?

- Error taxonomy: Distinguish validation failures, transient upstream errors, auth failures, and business rule conflicts.

- Data reconciliation: Decide how you'll compare source and destination states and how often.
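Two of the spec items above, error taxonomy and backoff, fit naturally in one sketch. The status-to-category mapping is an assumption for illustration, since partners use HTTP codes differently. `send` is a hypothetical callable that performs one request and returns `(status, body)`.

```python
import random
import time
from enum import Enum


class ErrorKind(Enum):
    VALIDATION = "validation"
    AUTH = "auth"
    TRANSIENT = "transient"
    BUSINESS = "business"


def classify(status: int) -> ErrorKind:
    """Map an HTTP status to an error kind (assumed mapping; partners differ)."""
    if status in (401, 403):
        return ErrorKind.AUTH
    if status == 409:
        return ErrorKind.BUSINESS
    if status == 429 or status >= 500:
        return ErrorKind.TRANSIENT
    return ErrorKind.VALIDATION


def call_with_backoff(send, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Retry only transient failures, with exponential backoff and jitter."""
    for attempt in range(1, max_attempts + 1):
        status, body = send()
        if status < 400:
            return body
        kind = classify(status)
        if kind is not ErrorKind.TRANSIENT or attempt == max_attempts:
            raise RuntimeError(
                f"{kind.value} error after {attempt} attempt(s): HTTP {status}"
            )
        # Full jitter keeps many clients from retrying in lockstep.
        sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))


# Usage with a fake upstream that recovers on the third call.
responses = iter([(503, None), (429, None), (200, {"ok": True})])
print(call_with_backoff(lambda: next(responses), sleep=lambda _: None))
```

Retrying an auth or validation failure only burns rate limit, so the taxonomy decides the retry policy. Retried writes should also carry an idempotency key so a repeat cannot create a second business effect.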

A good integration spec spends less time on happy-path requests and more time on state drift, retries, and ownership.

Test what production will actually do

Sandbox environments are useful, but they often hide the ugly parts. Don't assume sandbox behavior predicts production behavior.

Cover these test tracks:

- Contract tests: Verify field structure, null handling, and enum drift.

- Operational tests: Simulate timeouts, stale tokens, partial writes, and delayed callbacks.

- Security tests: Check auth scope, log hygiene, secret rotation, and access boundaries.

- Rollback tests: Practice the procedure for disabling or isolating the integration safely.
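A contract test from the list above can be as plain as a function that returns every violation it finds. The field names and status values below are hypothetical. The real lists should come from the partner's documented contract.

```python
# Assumed contract for a transaction payload (illustrative only).
KNOWN_STATUSES = {"pending", "booked", "rejected"}
REQUIRED_FIELDS = {"id": str, "status": str, "amount": str}


def contract_violations(payload: dict) -> list[str]:
    """Check required fields, nulls, types, and unexpected enum values."""
    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if payload.get(name) is None:
            problems.append(f"missing or null: {name}")
        elif not isinstance(payload[name], expected_type):
            problems.append(f"wrong type: {name}")
    status = payload.get("status")
    if isinstance(status, str) and status not in KNOWN_STATUSES:
        problems.append(f"unknown status: {status}")
    return problems


print(contract_violations({"id": "t1", "status": "on_hold", "amount": None}))
```

Running this against recorded production payloads, not only sandbox fixtures, is what surfaces enum drift before a customer does.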

After launch, keep monitoring simple and actionable. Log request IDs, partner identifiers, correlation IDs, and state transitions in a way the support team can follow without reading source code.
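As one way to do that with the standard `logging` module, the sketch below emits each state transition as a single JSON line. The `context` attribute and the field names are conventions assumed for this example, not part of the logging library.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON line, merging in structured context."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {"level": record.levelname, "message": record.getMessage()}
        entry.update(getattr(record, "context", {}))
        return json.dumps(entry, sort_keys=True)


def log_transition(logger, correlation_id, request_id, partner, old_state, new_state):
    """Log a state change with the IDs support needs to trace it."""
    logger.info(
        "state transition",
        extra={
            "context": {
                "correlation_id": correlation_id,
                "request_id": request_id,
                "partner": partner,
                "from": old_state,
                "to": new_state,
            }
        },
    )


handler = logging.StreamHandler(sys.stdout)
handler.setFormatter(JsonFormatter())
logger = logging.getLogger("integration")
logger.addHandler(handler)
logger.setLevel(logging.INFO)

log_transition(logger, "corr-42", "req-7", "bank_x", "pending", "settled")
```

With one line per transition, a support engineer can filter by `correlation_id` and read the history of a payment in order.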

How Unified APIs Accelerate Banking Integration

Analysts at Grand View Research found that core banking software made up more than 44% of banking system software revenue in 2022, and on-premise deployments accounted for over 58%. For developers, that usually means the same hard reality. Each new bank connection brings another set of protocols, data shapes, authentication rules, and operational quirks.

The unified API model changes what the team has to build

A unified API gives product and integration teams one stable contract above many inconsistent banking systems. Instead of writing and maintaining a separate adapter for every institution, the team targets one interface and lets the unified layer translate the details.

That shift matters because the expensive part of banking integration is rarely the first successful API call. The cost sits in all the repeated engineering around field mismatches, bank-specific authentication flows, version changes, and support incidents when one provider behaves differently from another. A good unified layer contains that variation in one place.

For the delivery team, the unit of work changes from "build another custom connector" to "map a new provider into the existing model." That is a smaller problem. It is easier to test, easier to document, and easier to roll out across multiple products.

Why developers care

Unified APIs reduce duplicated effort in four places that usually consume the schedule:

- Data normalization. Teams work with one account, customer, payment, or transaction model instead of reconciling every bank's naming and structure choices.

- Authentication handling. The abstraction layer can standardize token exchange, consent flows, certificate requirements, or session handling behind one contract.

- Change management. If one upstream bank revises an endpoint or payload, the fix stays inside the connector instead of forcing application teams to refactor.

- Partner rollout speed. Supporting the fifth or fiftieth institution starts to look like onboarding work, not a fresh integration project.

That is the practical developer experience advantage. Less connector sprawl. Fewer one-off edge cases in product code. Faster time-to-market when the business wants broader bank coverage.

The pattern already works in other fragmented integration domains

This abstraction model is proven outside banking. In eCommerce, API2Cart provides a single API that helps software vendors connect with many shopping carts and marketplaces without building each integration from scratch. Banking presents different regulatory and operational constraints, but the architectural principle is the same: standardize the contract that application developers see, and isolate source-specific complexity behind it.

For teams modernizing mixed environments, this pragmatic approach to API-led connectivity is the right framing. The goal is not to pretend the backend systems are uniform. The goal is to give developers a predictable interface while the abstraction layer handles inconsistency where it belongs.

The best unified APIs do not erase backend complexity. They keep it away from the application team.

The trade-off is real

A unified API is not free simplicity. It moves complexity into a shared layer that now needs clear ownership, connector standards, version policy, and support discipline.

I have seen unified models fail for one predictable reason. They become too generic. The team tries to force every bank into the same lowest-common-denominator schema, then discovers that payment status rules, consent scopes, or account capabilities do not line up cleanly. When that happens, developers either lose important functionality or start bypassing the abstraction.

The fix is architectural discipline. Standardize the common path, but leave controlled extension points for bank-specific capabilities. That gives application teams a consistent default without blocking advanced use cases.
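Here is a minimal sketch of that balance: a canonical payment model for the common path, plus an allow-listed `extensions` field for provider-specific capabilities. The provider names, status codes, and extension fields are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass(frozen=True)
class Payment:
    payment_id: str
    status: str  # canonical: "pending", "settled", or "failed"
    provider: str
    extensions: dict[str, Any] = field(default_factory=dict)


# Hypothetical provider status codes mapped onto the canonical set.
STATUS_MAP = {
    "bank_x": {"ACCP": "pending", "ACSC": "settled", "RJCT": "failed"},
    "bank_y": {"queued": "pending", "done": "settled", "error": "failed"},
}

# Controlled extension points: only reviewed fields pass through.
ALLOWED_EXTENSIONS = {
    "bank_x": {"instant_eligible"},
    "bank_y": {"batch_window"},
}


def to_canonical(provider: str, raw: dict) -> Payment:
    """Map a raw provider payload onto the canonical model."""
    status = STATUS_MAP[provider][raw["status"]]
    extras = {k: raw[k] for k in ALLOWED_EXTENSIONS[provider] if k in raw}
    return Payment(raw["id"], status, provider, extras)


p = to_canonical(
    "bank_x",
    {"id": "p1", "status": "ACSC", "instant_eligible": True, "debug": "x"},
)
print(p)
```

Application code can rely on `status` everywhere and opt into `extensions` only where a feature needs it. Unreviewed provider fields such as `debug` never leak into the shared model.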

When teams strike that balance, banking integration stops behaving like a series of custom projects. It starts behaving like a platform capability. That is the point of the unified API model, and it is why it can shorten delivery cycles so sharply in a fragmented banking environment.

Common Pitfalls and How to Mitigate Them

Most banking integration failures aren't caused by one dramatic mistake. They come from a set of predictable misjudgments that stack up.

Pitfall one: designing for ideal APIs instead of real cores

A lot of teams architect for cloud-native assumptions, then discover the critical system is older, centralized, and on-premise. That isn't a niche edge case. According to Grand View Research on banking system software, the core banking system software segment accounted for over 44.0% of market revenue in 2022, and on-premise deployment represented over 58.0% of the market.

That means your integration design has to tolerate older operating models. Build an abstraction layer over the core. Don't assume you can replace it, and don't expose its quirks directly to product teams if you can avoid it.

Pitfall two: underestimating data inconsistency

Banking system integration often breaks on field semantics, not transport. Dates arrive in different formats. Status values look similar but mean different things. One system sends updates as events. Another only exposes snapshots.

Mitigation starts with explicit normalization:

- Create a canonical data model for your application boundary

- Map source-specific meaning instead of just matching field names

- Track lineage so support teams can trace where a value came from

- Quarantine invalid records rather than automatically coercing them
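The normalization steps above can be sketched with date handling as the example. The source names, date formats, and record fields are assumptions. The pattern is what matters: map explicitly, keep lineage, and quarantine what fails instead of guessing.

```python
from datetime import datetime

# Assumed per-source date formats (illustrative).
DATE_FORMATS = {"bank_x": "%Y-%m-%d", "bank_y": "%d/%m/%Y"}


def normalize(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split records into canonical rows and quarantined rows."""
    clean, quarantined = [], []
    for rec in records:
        try:
            fmt = DATE_FORMATS[rec["source"]]
            row = {
                "id": rec["id"],
                "booked_on": datetime.strptime(rec["booked"], fmt).date(),
                # Lineage: keep where the value came from and its raw form.
                "lineage": {"source": rec["source"], "raw_booked": rec["booked"]},
            }
        except (KeyError, ValueError) as exc:
            # Do not coerce; park the record with the reason for review.
            quarantined.append({"record": rec, "reason": repr(exc)})
            continue
        clean.append(row)
    return clean, quarantined


clean, quarantined = normalize(
    [
        {"id": "t1", "source": "bank_x", "booked": "2024-03-05"},
        {"id": "t2", "source": "bank_y", "booked": "05/03/2024"},
        {"id": "t3", "source": "bank_x", "booked": "not-a-date"},
    ]
)
print(len(clean), len(quarantined))
```

Both valid records end up with the same canonical date even though the sources disagree on format, and the bad record is held with enough context for someone to fix the mapping.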

Pitfall three: treating project scope as fixed when it isn't

Integration projects attract extra requirements. Once the pipe exists, every stakeholder wants one more field, one more event, one more write-back action.

Control that with release boundaries and ownership. Separate the launch contract from the backlog. If you don't, the integration becomes a general-purpose platform before it's stable enough to deserve that role.

Pitfall four: weak operational planning

Some teams launch with code coverage and almost no operational readiness. Then the first outage hits, and nobody knows whether the problem is auth expiration, upstream throttling, payload drift, or queue lag.

A practical mitigation set is small but important:

| Risk | Mitigation |
|---|---|
| Silent data drift | Reconciliation jobs and schema validation |
| Repeated duplicate actions | Idempotency keys and replay-safe handlers |
| Unknown failure causes | Correlation IDs and structured logs |
| Unsafe releases | Feature flags and rollback procedures |
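To show what a replay-safe handler from the table means in practice, here is a deliberately small sketch. `PayoutHandler` and its fields are hypothetical, and the in-memory dictionary stands in for a durable store that would need an atomic "insert if absent" in production.

```python
class PayoutHandler:
    """Replay-safe handler: one business effect per idempotency key."""

    def __init__(self) -> None:
        self._results: dict[str, dict] = {}
        self.executed = 0  # counts real side effects, for demonstration

    def handle(self, idempotency_key: str, request: dict) -> dict:
        # A replayed key returns the stored result without re-executing.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        self.executed += 1
        result = {"payout_id": f"po_{self.executed}", "amount": request["amount"]}
        self._results[idempotency_key] = result
        return result


handler = PayoutHandler()
first = handler.handle("key-1", {"amount": "25.00"})
retry = handler.handle("key-1", {"amount": "25.00"})
print(first == retry, handler.executed)
```

The retry gets the same response as the original call and the payout runs once, which is the property that makes aggressive client retries safe.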

Mature teams don't ask only, "Does the integration work?" They ask, "Can we explain it, monitor it, and recover it under pressure?"

The teams that do banking integration well aren't always the ones with the most code. They're the ones that reduce surprises before production.

If your team is building software that has to connect with many external systems, the unified API model is worth serious consideration. API2Cart gives B2B software vendors one API for connecting to many commerce platforms, which makes it a practical reference point for developers thinking about how to reduce connector sprawl, standardize data access, and ship integrations faster in other fragmented domains, including financial workflows.